Summary

If you're hosting your Ghost blog in Azure Web Apps then you're probably not backing up your database, even if you think you are; I wasn't, but I am now.

Here is a simple Azure WebJob (written in .NET Core 1.0) to enable you to backup your SQLite database with zero downtime (i.e. without taking your blog or app offline).

As always, the code is all in GitHub, but this time I've also included a zip file containing the finished WebJob so you can upload it straight to your website without having to build it first.

Background

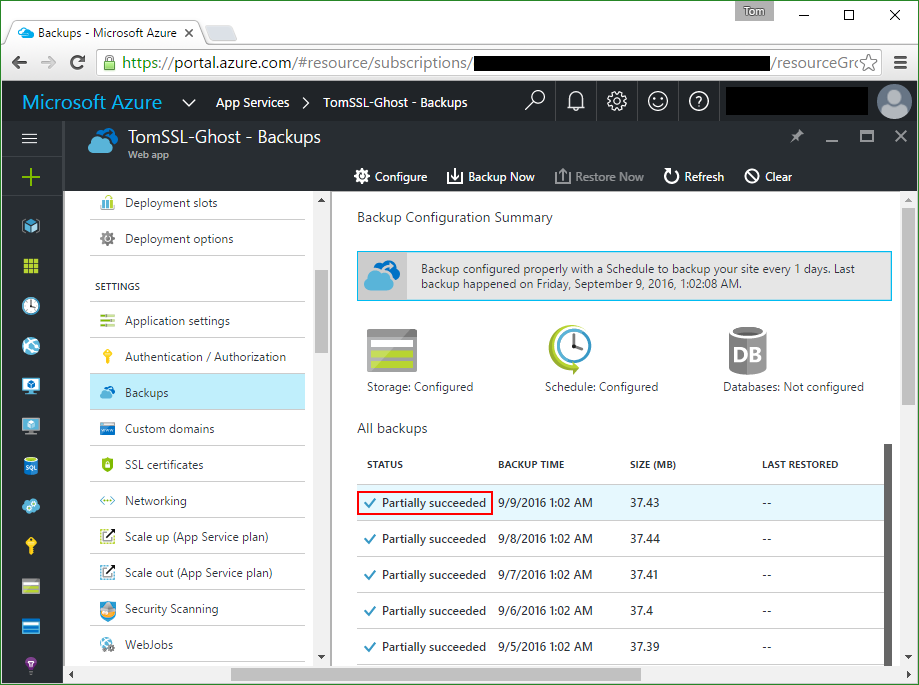

When I first installed my Ghost blog in Azure at the end of 2014 (literally), I also set up a nightly backup job in the usual fashion using the Azure Portal.

The other day I wanted to do some work on my Ghost installation, so I went to grab one of the backups to install on my machine at home and noticed this:

Look at the bit in red: Partially succeeded

Given the title of this article, I daresay you can guess which file was not backed up successfully.

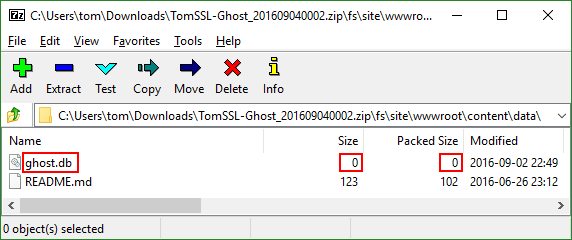

Actually, I've been slightly misleading about the sequence of events. What I really did first was to run Microsoft Azure Storage Explorer and grab the latest backup.

Microsoft Azure Storage Explorer

If you're not already using Microsoft Azure Storage Explorer, you should go and get it right now[1] from http://storageexplorer.com/. Amongst other things, it makes uploading and downloading files to and from your Azure Storage accounts a point and click affair.

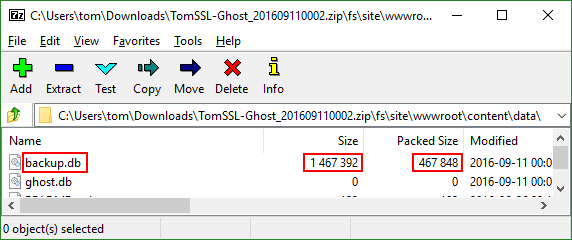

Then I opened the zip file and noticed this:

That led me back to the Azure Portal and... you know the rest.

I'm not backing up my database. Now what?

A quick search on Google[2] found lots of helpful suggestions that all involved either manually exporting the contents of the blog as a json file (through the admin application, making automation very difficult), or stopping the blog from running altogether (to relinquish the lock on the database file), neither of which was acceptable to me. Of course a few seconds of downtime for my blog probably doesn't matter, but I wanted to do it properly.

As luck would have it, SQLite has an online backup API, but unfortunately it hasn't been added to sqlite3 (the npm package used by Ghost). I hunted around for a bit and decided that it wasn't going to be very easy to get something running in Node.js to perform the backup.

C# and Azure WebJobs to the rescue

Finally I found this Stackoverflow question which not only confirmed that the online backup API was present in System.Data.SQLite, it even provided a snippet of C# code to do it.

It occurred to me that I could create a simple console application and upload it as an Azure WebJob which could easily be scheduled to run each night and copy the database to a new file, before my main backup ran.

At this point I should probably mention that, rather than making this into a general purpose tool, I wanted to make this code as simple as possible. Remember, I had a live database to backup. This means that, rather than copying the database to a separate storage account, I'm just going to back it up in the same directory and let the normal backup process take care of persisting it after that.

In fact it's so simple and so specific that you're probably thinking I'm an idiot for not writing it as an Azure Function, but unfortunately I don't think that's possible right now. As far as I'm aware, at the time of writing it's not possible to access the local file system of a separate Web App from within an Azure Function.

This is one of those proof of concept pieces which will probably end up in production forevermore.

Here's the code.

using System;

using System.Data.SQLite;

using System.IO;

namespace Ghost_Azure_BackupDb

{

class Program

{

static void Main(string[] args)

{

var path = @"d:\home\site\wwwroot\content\data";

var dbPath = $"{path}{Path.DirectorySeparatorChar}ghost.db";

var dbBackupPath = $"{path}{Path.DirectorySeparatorChar}backup.db";

Console.WriteLine($"Backup running in {System.AppContext.BaseDirectory}");

Console.WriteLine($"About to backup db from {dbPath} to {dbBackupPath}");

using (var source = new SQLiteConnection($"Data Source={dbPath}; Version=3;"))

using (var destination = new SQLiteConnection($"Data Source={dbBackupPath}; Version=3;"))

{

source.Open();

destination.Open();

source.BackupDatabase(destination, "main", "main", -1, null, 0);

}

Console.WriteLine("Backed up db successfully");

}

}

}

Notice that I've tried to make it look clever by using string interpolation, but it's still very simple indeed. It uses the System.Data.SQLite.Core nuget package, but no others.

How to install the WebJob

You need to place the contents of the bin folder into a zip file and then install the zip file as a WebJob inside your existing Azure Web App in which you're hosting your Ghost blog.

WebJobs using the SDK require a storage account to store log files, with the connection string being stored under Application Settings. However, in the case of the simple console application we're going to use, this isn't needed, so we can skip this stage. In other words, I forgot to create the storage account and it still worked.

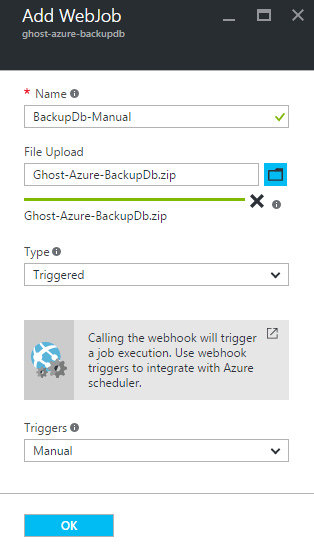

In order to install the WebJob into your existing Web App you just need to navigate to your Web App and then to the WebJobs blade in the Azure Portal and click on +Add.

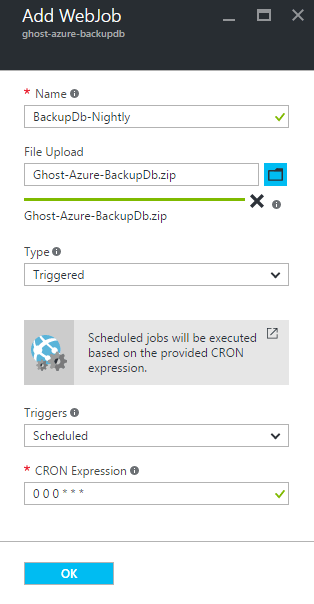

Give the job a suitable name, upload the zip file containing the console app and the SQLite dlls and select a job type of Triggered with a Manual trigger. Like this:

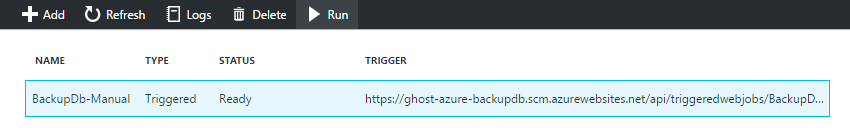

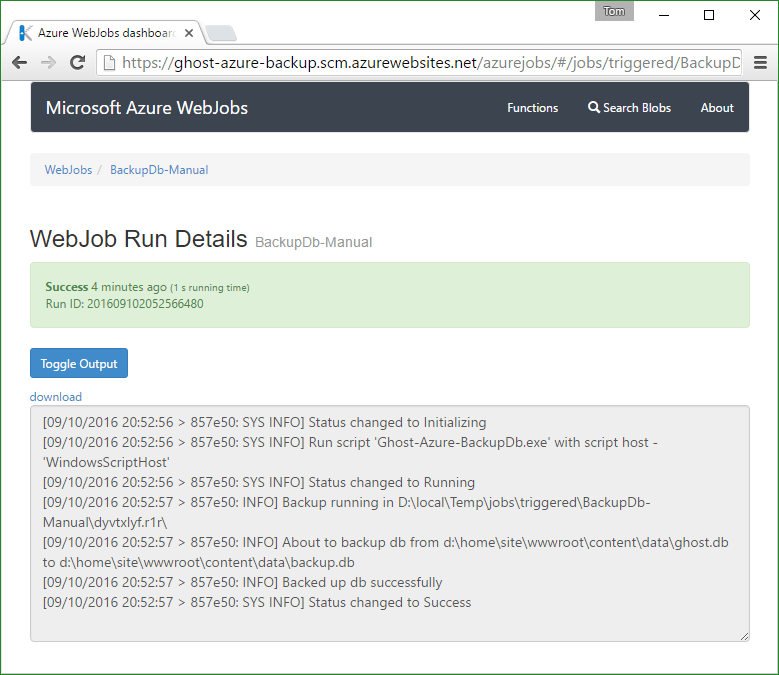

Now test your job by selecting it and clicking Run. Don't worry, you need to be logged in to run it.

Make sure you've included all of the SQLite dlls

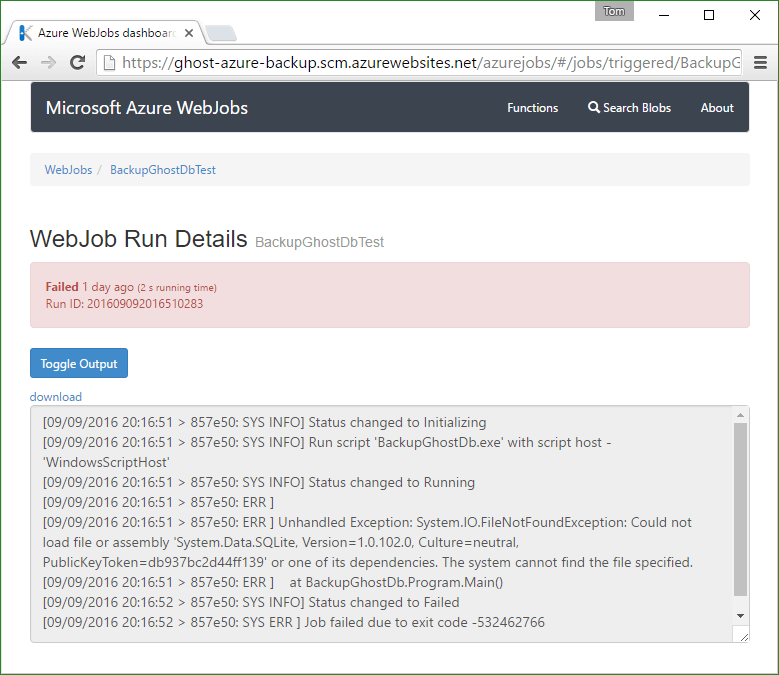

When I first uploaded my WebJob it failed like this:

I thought I'd put all the necessary files into the zip file, but clearly not. I'd failed to notice the SQLite.Interop.dll file inside each of the bin\x86 and bin\x64 folders of my console application.

Once I included those folders (and their contents) in my zip file, it worked.

If you're running Ghost in 32-bit mode (as advised by Scott Hanselman), you could delete the x64 folder from the zip file.

If you really wanted to, you could put the 32-bit version of SQLite.Interop.dll in the root of the zip file and do this in your code:

Environment.SetEnvironmentVariable("PreLoadSQLite_BaseDirectory", System.AppContext.BaseDirectory);

Incidentally, I've had the missing SQLite.Interop.dll issue before. Last year I wrote an article entitled see your SQL queries when using NHibernate with LINQPad in which the sample code used a SQLite database and I encountered the same problem.

An extra point to mention is that, once you specify the base directory, the application can't automatically select the correct version of SQLite.Interop.dll based on the processor architecture, so it's probably more trouble than it's worth.

My advice is to leave well alone. The zip file in GitHub works and it contains versions of

SQLite.Interop.dllfor 32- and 64-bit processors.

Success

Now I have a new file called backup.db. Ordinarily I'd name it something like backup_yyyymmdd.db, but I'm deliberately overwriting it each time so I don't end up with loads of files. Remember, I am taking a real backup of everything each night, so I'll have nightly versions of backup.db.

Scheduling the backup

Just create another WebJob, call it something like BackupDb-Nightly and upload the same zip file again.

Remember the syntax for the CRON expression (which is explained in some detail here), specifically the fact that it is configurable to the second and is of the format:

{second} {minute} {hour} {day} {month} {day of the week}

Thus taking a nightly backup at midnight (remember it needs to be taken prior to the normal Azure backup, which in my case runs at 1:02am) is simply:

0 0 0 * * *

I did this and the next time I downloaded my nightly backup it contained a proper copy of my database.

Things to remember

- This is not production code (except I'm using it in production).

- The paths are hard coded for Ghost in Azure.

- It doesn't run as a Singleton, so if you end up with simultaneous scheduled and manual runs it might go wrong. In practice this would be very difficult to achieve and it won't corrupt the original database; it just might not back it up properly.

- Don't enhance it and add fancy error handling. This is a very simple console application so, if it goes wrong, let it fail and let Azure WebJobs handle it all for you[3].

- You should be running your Ghost blog in 32-bit mode (and probably everything else, too).

- If you're going to put

SQLite.Interop.dllsomewhere else, remember to include the right version (probably the 32-bit version - see previous point) and to set the environment variable in your code. But you probably shouldn't. - Using http://storageexplorer.com/ makes it really easy to check your backup worked.

- Yes, I know I can backup my data by exporting it as a json file from within Ghost, but this is more reliable and can be scheduled to run automatically. In any case, you might be using this for a different app.

- In order to guarantee to run your WebJobs successfully, your Web App needs to be Always On. But that's also true for it to have zero downtime in the first place, so the point is moot. Furthermore, if the Web App is not running, then the normal backup will succeed.

- You might be tempted to tweak the code and get it to restore your database with zero downtime, but remember that sometimes Ghost needs to run database migration scripts, so doing this might put your blog into maintenance mode where it will serve a 503 page for several minutes UPDATE - Since Ghost 0.11.0 was released on 2016-09-15 the database migration is much quicker.

- Your nightly backup will still think it's been partially successful, but you will have a full backup of your database.

- Beware timezones. My backup seems to run at BST and my WebJob seems to run UTC. So midnight and 1:02am (partially successful backup time seen in screenshot) are actually two minutes apart.

Further work

If you're using the WebJob to backup the database for something other than Ghost then you'll almost certainly need to change the path variables. If I ever need to do that, I'll make them configurable without having to recompile the WebJob. I could make this into a proper Azure WebJob using the SDK, with different hooks for manual and scheduled backup and restore, etc. I did consider this, but it would have made it a much larger application with a lot more dependencies.

Ideally, I'd like to revisit this and write it as an Azure Function, but as far as I'm aware, it's not possible to access the file system of a separate Web App from an Azure function and I didn't think I could run the Ghost database from a separate fileshare (certainly not without modifying the Ghost source code). Unlike WebJobs, Azure Functions are grouped together inside separate Web Apps. In fact they are often referred to as being "serverless" code, which supports the idea that they can't access the local file system of a separate Web App. If anybody knows that this is possible, please let me know.

Finally, if you think this looks like it could form the basis of an article about automatically keeping your Azure Ghost installation up to date with zero downtime, you'd be right. Expect that article very soon.

Conclusion

If you're running a Ghost blog in Azure using the default SQLite database (which you should be, as it's excellent) then, unless you're stopping your blog each time you make a backup, you are probably not backing up your database. This is true for any other web application which uses SQLite, too.

In this article we have seen a simple Azure WebJob which enables you to backup a SQLite database from a running application with no downtime and which doesn't cost any money to run. The source code is available on GitHub at https://github.com/TomChantler/Ghost-Azure-BackupDb, as is the compiled executable, packaged in a zip file ready to be uploaded to Azure.

If you're running Ghost in Azure Web Apps, it'll take you a couple of minutes to make sure you are making proper backups. You should do that.

If you found this article useful, you can comment below, subscribe or follow me on Twitter. Follow @TomChantler

Or immediately after you've finished reading this article. Definitely soon. In any case, the link opens in a new tab. ↩︎

Or Bing, of course. But it was Google. ↩︎

At one point I made the mistake of handling the errors gracefully and then the job said it had completed successfully. It wasn't until I examined the log that I saw that it hadn't.

↩︎